Simon, Lau to investigate neural bases of natural language understanding

Jonathan Simon and Ellen Lau

“Neural representations of continuous speech and linguistic context in native and non-native listeners” is one of six FY19 seed grants newly issued by the Brain and Behavior Initiative (BBI) at the University of Maryland. Now in its fourth year, the program has awarded a total of $1.3M to 21 projects; in turn, these projects have netted just under $11M in external awards.

Assistant Professor Ellen Lau of the Linguistics Department and Professor Jonathan Simon, who has a joint appointment in Electrical and Computer Engineering, Biology and the Institute for Systems Research, are the principal investigators (PIs) for the $50,000 seed grant.

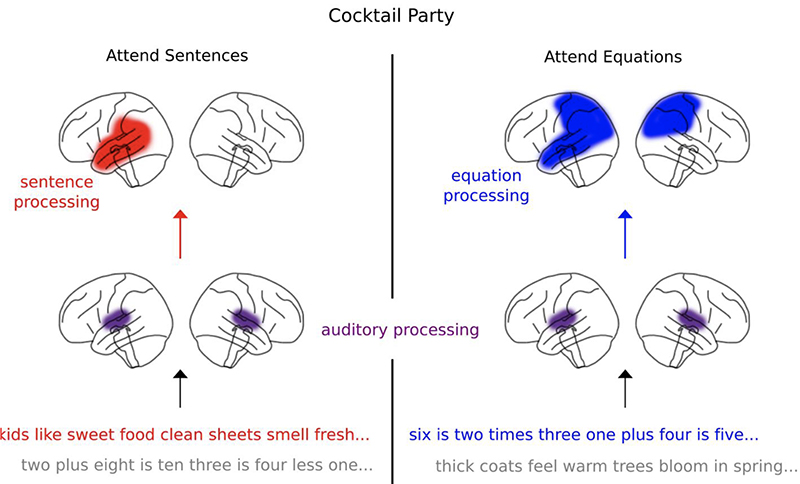

The project will develop an innovative approach to investigating the neural bases of natural language understanding in native and non-native speakers. There are two primary aims. First, the researchers will investigate central questions about the interaction of top-down predictions and bottom-up input (a focus of Lau’s group) by leveraging a novel analytic approach for MEG analysis of continuous speech (developed by Simon’s group). Second, the researchers will apply this method to characterizing both bottom-up and top-down processing differences between native and non-native speakers during comprehension of continuous speech.

The research aims to answer two main questions:

- Does language processing affect acoustic processing? In other words, does the expectation of a semantically probable word make that word sound different?

- How does real-time neural language processing change—or does it change—when that language was learned later in life?

“The first question is an example of investigating neural networks that use genuine feedback circuitry, not just traditional feedforward architecture,” Simon explains. “The second is an example of the direction of a real-world application in which we’d like to take the research.”

Because of the central role of context in language comprehension, resolving the form of its real-time interaction with bottom-up input representations will have broad implications for neurocognitive models of speech recognition.

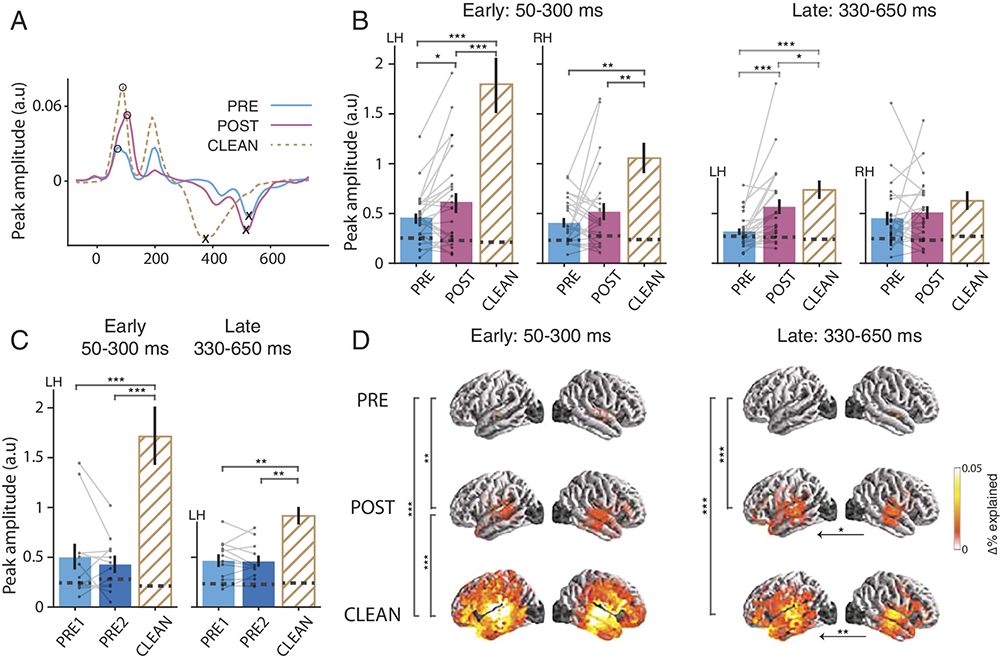

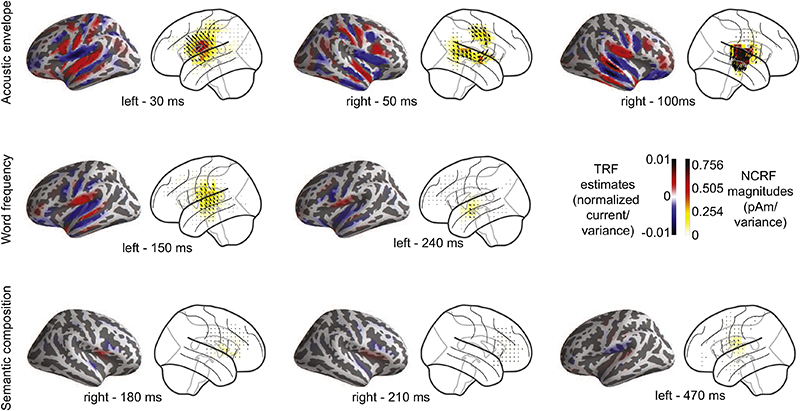

This project will build on 2018 research by Simon, ISR Postdoctoral Researcher Christian Brodbeck, and L. Elliot Hong of the University of Maryland School of Medicine. “Rapid transformation from auditory to linguistic representations of continuous speech” was published in the Cell Press/Elsevier journal Current Biology. The paper relates how the researchers were able to see where in the brain, and how quickly—in milliseconds—the brain’s neurons transition from processing the sound of speech to processing the language-based words of the speech.

In the current project, Simon and Lau bring very different sets of expertise that, only when combined, span the breadth of the research proposal. Lau contributes an understanding of higher-level syntactic and semantic processing and of neural responses to top-down contextual prediction in language. Simon brings expertise in auditory neuroscience and signal processing, culminating in a new methodology for identifying neural responses to continuous speech. The temporal response function analysis method for MEG data, developed by Simon and others, can separate and evaluate the effects of acoustic, phonological, and lexical variables from a relatively brief recording session in which participants simply listen to a story or text.
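For readers curious about what a temporal response function (TRF) analysis involves, the general idea can be illustrated with a small simulation. This is only a rough sketch, not the researchers' actual pipeline: it assumes ordinary ridge regression on time-lagged predictors as a stand-in estimator, uses simulated data in place of MEG recordings, and every name in it (`lagged_design`, `estimate_trf`, the two toy predictors) is hypothetical.

```python
import numpy as np


def lagged_design(predictors, n_lags):
    """Stack time-shifted copies of each predictor (samples x features)."""
    n_samples, n_features = predictors.shape
    design = np.zeros((n_samples, n_features * n_lags))
    for f in range(n_features):
        for lag in range(n_lags):
            # Column holds predictor f delayed by `lag` samples (zero-padded).
            design[lag:, f * n_lags + lag] = predictors[:n_samples - lag, f]
    return design


def estimate_trf(predictors, response, n_lags, ridge=1.0):
    """Estimate one response kernel per predictor with ridge regression.

    Assumption: ridge regression is used here for simplicity; it is not
    claimed to be the estimator used in the research described above.
    """
    X = lagged_design(predictors, n_lags)
    gram = X.T @ X + ridge * np.eye(X.shape[1])
    weights = np.linalg.solve(gram, X.T @ response)
    return weights.reshape(predictors.shape[1], n_lags)


# Simulated stand-ins for two speech-derived variables (hypothetical).
rng = np.random.default_rng(0)
n_samples, n_lags = 5000, 20
acoustic = rng.standard_normal(n_samples)                  # e.g. an envelope
word_onsets = (rng.random(n_samples) < 0.02).astype(float)  # sparse impulses
predictors = np.column_stack([acoustic, word_onsets])

# Ground-truth kernels used only to generate the simulated "neural" signal.
true_trf = np.vstack([
    np.exp(-np.arange(n_lags) / 5.0),
    np.sin(np.linspace(0.0, np.pi, n_lags)),
])
response = lagged_design(predictors, n_lags) @ true_trf.ravel()
response += 0.1 * rng.standard_normal(n_samples)

# Each row of `estimated` is the recovered response to one variable.
estimated = estimate_trf(predictors, response, n_lags, ridge=1e-3)
print("largest absolute error:", np.abs(estimated - true_trf).max())
```

Because both variables enter one joint regression, each recovered kernel reflects the response attributable to that variable after accounting for the other, which is the sense in which such an analysis can separate overlapping influences in a continuous signal.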

The researchers believe it would be impossible for either of them to do this cutting-edge work without the expertise that the other brings. Simon notes that it is the BBI Seed Grant program that has facilitated this brand-new collaboration. “It accelerated my conversations with Dr. Lau from informal discussions of potential common interests to planning out the details of proposed experiments and deciding on the methodologies we will use to analyze the results.”

Published May 16, 2019