News Story

A nerve modeled on a battery

Based on a News Release by Taylor Kabota, Stanford University

For all the improvements in computer technology over the years, we still struggle to recreate the low-energy, elegant processing of the human brain. Now, researchers with the Nanostructures for Electrical Energy Storage Energy Frontier Research Center have made an advance that could help computers mimic one piece of the brain’s efficient design – an artificial version of the space over which neurons communicate, called a synapse.

"More and more, the kinds of tasks that we expect our computing devices to do require computing that mimics the brain because using traditional computing to perform these tasks is becoming really power hungry," said A. Alec Talin, distinguished member of technical staff at Sandia National Laboratories in Livermore, California; a principal investigator on the NEES project; and senior author of the paper. "We’ve demonstrated a device that’s ideal for running these type of algorithms and that consumes a lot less power."

The new artificial synapse, reported in the Feb. 20 issue of Nature Materials, mimics the way synapses in the brain learn through the signals that cross them. This approach saves a significant amount of energy compared with traditional computing, which involves separately processing information and then storing it in memory. Here, the processing creates the memory.

"It works like a real synapse but it’s an organic electronic device that can be engineered," said Alberto Salleo, associate professor of materials science and engineering at Stanford and senior author of the paper. "It’s an entirely new family of devices because this type of architecture has not been shown before. For many key metrics, it also performs better than anything that’s been done before with inorganics."

This synapse may one day be part of a more brain-like computer, which could be especially beneficial for computing that works with visual and auditory signals, as in voice-controlled interfaces and driverless cars. Past efforts in this field have produced high-performance neural networks supported by artificially intelligent algorithms, but these are still distant imitators of the brain that depend on energy-consuming traditional computer hardware.

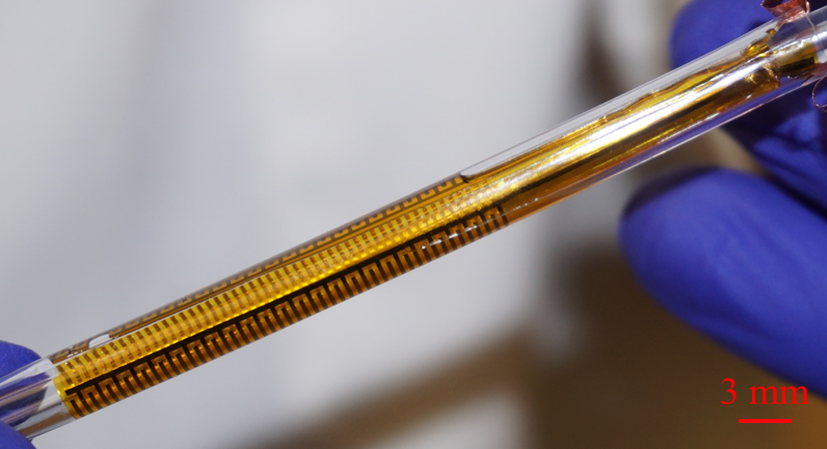

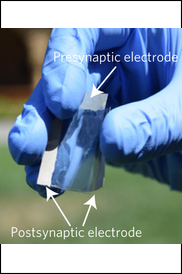

The artificial synapse is based on a battery design. It consists of two thin, flexible films with three terminals, connected by an electrolyte of salty water. The device works as a transistor, with one of the terminals controlling the flow of electricity between the other two.

Much as a neural path in the brain is reinforced through learning, the artificial synapse is programmed by discharging and recharging it repeatedly. Through this training, the researchers have been able to predict, to within 1 percent uncertainty, what voltage will be required to get the synapse to a specific electrical state; once there, it remains at that state. In other words, unlike a common computer, where you save your work to the hard drive before you turn it off, the artificial synapse can recall its programming without any additional actions or parts.

Talin and his graduate student Elliott Fuller are part of the Nanostructures for Electrical Energy Storage project, which aims to create new types of batteries based on nanostructure and nanoarchitecture. NEES is headquartered at the University of Maryland, with Sandia’s Talin as one of the twenty principal investigators on the project.

In the same issue of Nature Materials, researchers not related to the project review the work. "ENODe, however, obviates [the voltage-time dilemma, which states that low-energy switching and long retention times cannot be achieved simultaneously] with its unique switching mechanism – similar to charging or discharging a battery," say J. Joshua Yang and Qiangfei Xia of the University of Massachusetts.

Building a brain

When we learn, electrical signals are sent between neurons in our brain. The most energy is needed the first time a synapse is traversed. Every time afterward, the connection requires less energy. This is how synapses efficiently facilitate both learning something new and remembering what we’ve learned. The artificial synapse, unlike most other versions of brain-like computing, also fulfills these two tasks simultaneously, and does so with substantial energy savings.

"Deep learning algorithms are very powerful but they rely on processors to calculate and simulate the electrical states and store them somewhere else, which is inefficient in terms of energy and time," said Yoeri van de Burgt, former postdoctoral scholar in the Salleo lab and lead author of the paper. "Instead of simulating a neural network, our work is trying to make a neural network."

Only one artificial synapse has been produced, but researchers at Sandia used 15,000 measurements from experiments on that synapse to simulate how an array of them would work in a neural network. They tested the simulated network’s ability to recognize handwritten digits 0 through 9. Tested on three datasets, the simulated array was able to identify the handwritten digits with an accuracy between 93 and 97 percent.

Although this task would be relatively simple for a person, traditional computers have a difficult time interpreting visual and auditory signals.

This device is extremely well suited for the kind of signal identification and classification that traditional computers struggle to perform. Whereas digital transistors can be in only two states, such as 0 and 1, the researchers successfully programmed 500 states in the artificial synapse, which is useful for neuron-type computation models. In switching from one state to another they used about one-tenth as much energy as a state-of-the-art computing system needs in order to move data from the processing unit to the memory.
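To put the 500 programmable states in perspective, the short Python sketch below compares how much information one multi-state device could hold with what a two-state digital element holds. The helper names `bits_per_device` and `quantize` are hypothetical, and the evenly spaced levels are an illustrative assumption rather than a description of how the researchers' device or simulation actually maps values to states.

```python
import math

NUM_STATES = 500  # number of programmed states reported in the article


def bits_per_device(num_states: int) -> float:
    """Information capacity, in bits, of one element with num_states levels."""
    return math.log2(num_states)


def quantize(weight: float, num_states: int = NUM_STATES) -> int:
    """Map a weight in [0, 1] to the index of the nearest level.

    Assumption: levels are evenly spaced. Out-of-range weights are clipped.
    """
    clipped = min(max(weight, 0.0), 1.0)
    return round(clipped * (num_states - 1))


if __name__ == "__main__":
    print(f"two-state element: {bits_per_device(2):.2f} bits")
    print(f"500-state element: {bits_per_device(NUM_STATES):.2f} bits")
    print(f"weight 0.25 maps to level {quantize(0.25)} of {NUM_STATES}")
```

Under these assumptions, a single 500-state element carries roughly nine bits, which is why analog-like devices are attractive for storing neural network weights directly.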

This, however, means they are still using about 10,000 times as much energy as the minimum a biological synapse needs in order to fire. The researchers are hopeful that they can attain neuron-level energy efficiency once they test the artificial synapse in smaller devices.
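The two comparisons above can be combined into a rough back-of-the-envelope ratio. The Python sketch below uses only the approximate factors quoted in the article; the constant and function names are hypothetical, and the result is an order-of-magnitude estimate, not a measured value.

```python
# Approximate factors quoted in the article (both are "about" figures).
CONVENTIONAL_OVER_ARTIFICIAL = 10      # conventional data move uses ~10x the synapse's switching energy
ARTIFICIAL_OVER_BIOLOGICAL = 10_000    # synapse switching uses ~10,000x a biological synapse's minimum


def conventional_over_biological(
    conventional_over_artificial: int = CONVENTIONAL_OVER_ARTIFICIAL,
    artificial_over_biological: int = ARTIFICIAL_OVER_BIOLOGICAL,
) -> int:
    """Chain the two ratios: conventional/biological = (conv/art) * (art/bio)."""
    return conventional_over_artificial * artificial_over_biological


if __name__ == "__main__":
    ratio = conventional_over_biological()
    print(f"conventional data move vs. biological minimum: about {ratio:,}x")
```

Taken at face value, the quoted figures imply that moving data in a conventional system costs on the order of 100,000 times the minimum energy a biological synapse needs to fire.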

Organic potential

Every part of the device is made of inexpensive organic materials. These aren’t found in nature but they are largely composed of hydrogen and carbon and are compatible with the brain’s chemistry. Cells have been grown on these materials and they have even been used to make artificial pumps for neural transmitters. The voltages applied to train the artificial synapse are also the same as those that move through human neurons.

All this means it’s possible that the artificial synapse could communicate with live neurons, leading to improved brain-machine interfaces. The softness and flexibility of the device also lend themselves to use in biological environments. Before any applications to biology, however, the team plans to build an actual array of artificial synapses for further research and testing.

This research was funded by the U.S. Department of Energy, the National Science Foundation, the Keck Faculty Scholar Funds, the Neurofab at Stanford, the Stanford Graduate Fellowship, Sandia’s Laboratory-Directed Research and Development Program, the Holland Scholarship, the University of Groningen Scholarship for Excellent Students, the Hendrik Muller National Fund, the Schuurman Schimmel-van Outeren Foundation, the Foundation of Renswoude (The Hague and Delft), the Marco Polo Fund, the Instituto Nacional de Ciência e Tecnologia/Instituto Nacional de Eletrônica Orgânica in Brazil, the Fundação de Amparo à Pesquisa do Estado de São Paulo and the Brazilian National Council.

A non-volatile organic electrochemical device as a low-voltage artificial synapse for neuromorphic computing, van de Burgt, et al. Nature Materials (2017) doi:10.1038/nmat4856

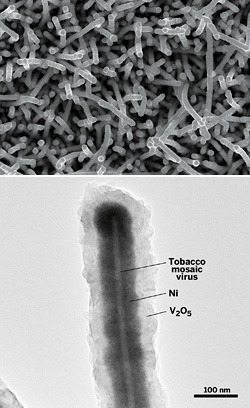

Special note: Alec Talin has collaborated with ISR Director Reza Ghodssi and his research group on NEES research. They published the following paper in November 2016:

H. Jung, K. Gerasopoulos, A. A. Talin, and R. Ghodssi, "In situ characterization of charge rate dependent stress and structure changes in V2O5 cathode prepared by atomic layer deposition," Journal of Power Sources, vol. 340, pp. 89-97, November 2016.

Published February 27, 2017